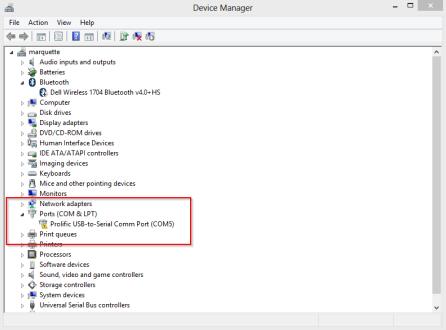

Download Linux Port Devices Drivers

COMPortAssignment is a free utility for assigning the COM port numbers of FTDI devices. It runs under Windows XP, Vista and Windows 7. The COMPortAssignment utility is available for download as a .zip file.

| Description | Type | OS | Version | Date |
|---|---|---|---|---|
| Administrative Tools for Intel® Network Adapters: This download record installs version 26.0 of the administrative tools for Intel® Network Adapters. | Software | OS Independent, Linux* | 26.0 (Latest) | 2/1/2021 |
| Intel® Network Adapters Driver for PCIe* 10 Gigabit Network Connections Under FreeBSD*: This release includes the 10 gigabit FreeBSD* Base Driver for Intel® Network Connections. | Driver | FreeBSD* | 3.3.22 (Latest) | 2/1/2021 |
| Intel® Ethernet Adapter Complete Driver Pack: This download installs version 26.0 of the Intel® Ethernet Adapter Complete Driver Pack for supported OS versions. | Driver | OS Independent | 26.0 (Latest) | 2/1/2021 |
| Intel® Ethernet Connections Boot Utility, Preboot Images, and EFI Drivers: This download version 26.0 installs UEFI drivers, Intel® Boot Agent, and Intel® iSCSI Remote Boot images to program the PCI option ROM flash image and update flash configuration options. | Software | OS Independent, Linux* | 26.0 (Latest) | 2/1/2021 |
| Intel® Network Adapter Driver for Windows Server 2012*: This download record installs version 26.0 of the Intel® Network Adapters driver for Windows Server 2012*. | Driver | Windows Server 2012* | 26.0 (Latest) | 2/1/2021 |
| Intel® Network Adapter Driver for Windows Server 2012 R2*: This download installs version 26.0 of the Intel® Network Adapters for Windows Server 2012 R2*. | Driver | Windows Server 2012 R2* | 26.0 (Latest) | 2/1/2021 |
| Intel® Network Adapter Linux* Virtual Function Driver for Intel® Ethernet Controller 700 and E810 Series: This release includes iavf Linux* Virtual Function Drivers for Intel® Ethernet Network devices based on 700 and E810 Series controllers. | Driver | Linux* | 4.0.2 (Latest) | 2/1/2021 |
| Non-Volatile Memory (NVM) Update Utility for Intel® Ethernet Network Adapter 700 Series: Provides the Non-Volatile Memory (NVM) Update Utility for Intel® Ethernet Network Adapter 700 Series. | Firmware | OS Independent | 8.20 (Latest) | 2/1/2021 |
| Intel® Network Adapter Driver for Intel® Ethernet Controller 700 Series under FreeBSD*: This release includes FreeBSD Base Drivers for Intel® Ethernet Network Connections, supporting devices based on the 700 series controllers. | Driver | FreeBSD* | 1.12.13 (Latest) | 2/1/2021 |
| Non-Volatile Memory (NVM) Update Utility for Intel® Ethernet Adapters 700 Series - Windows*: Provides the Non-Volatile Memory (NVM) Update Utility for Intel® Ethernet Adapters 700 Series - Windows*. | Firmware | OS Independent | 8.20 (Latest) | 2/1/2021 |
| Non-Volatile Memory (NVM) Update Utility for Intel® Ethernet Adapters 700 Series - Linux*: Provides the Non-Volatile Memory (NVM) Update Utility for Intel® Ethernet Adapters 700 Series - Linux*. | Firmware | Linux* | 8.20 (Latest) | 2/1/2021 |
| Non-Volatile Memory (NVM) Update Utility for Intel® Ethernet Adapters 700 Series - FreeBSD*: Provides the Non-Volatile Memory (NVM) Update Utility for Intel® Ethernet Adapters 700 Series - FreeBSD*. | Firmware | FreeBSD* | 8.20 (Latest) | 2/1/2021 |
| Non-Volatile Memory (NVM) Update Utility for Intel® Ethernet Adapters 700 Series - VMware ESX*: Provides the Non-Volatile Memory (NVM) Update Utility for Intel® Ethernet Adapters 700 Series - VMware ESX*. | Firmware | VMware* | 8.20 (Latest) | 2/1/2021 |
| Non-Volatile Memory (NVM) Update Utility for Intel® Ethernet Adapters 700 Series - EFI: Provides the Non-Volatile Memory (NVM) Update Utility for Intel® Ethernet Adapters 700 Series - EFI. | Firmware | OS Independent | 8.20 (Latest) | 2/1/2021 |
| Intel® Network Adapter Driver for Windows Server 2016*: This download record installs version 26.0 of the Intel® Network Adapter using Windows Server 2016*. | Driver | Windows Server 2016* | 26.0 (Latest) | 2/1/2021 |
| Intel® Network Adapter Driver for Windows Server 2019*: This download record installs version 26.0 of the Intel® Network Adapter using Windows Server 2019*. | Driver | Windows Server 2019* | 26.0 (Latest) | 2/1/2021 |
| Adapter User Guide for Intel® Ethernet Adapters: This download contains the 26.0 version of the Intel® Ethernet Adapter User Guide. | Driver | OS Independent | 26.0 (Latest) | 2/1/2021 |
| Intel® Ethernet Product Software Release Notes: Provides Intel® Ethernet Product Software Release Notes (26.0). | Driver | OS Independent | 26.0 (Latest) | 2/1/2021 |
| NVM Downgrade Package for Intel® Ethernet Adapters 700 Series (8.20 to 8.15 Only): Provides the NVM downgrade package for Intel® Ethernet Adapters 700 Series. (8.20 to 8.15 Only) | Firmware | OS Independent | 8.20 to 8.15 (Latest) | 2/1/2021 |
| NVM Downgrade Package for Intel® Ethernet Adapters 700 Series (8.20 to 8.10 Only): Provides the NVM downgrade package for Intel® Ethernet Adapters 700 Series. (8.20 to 8.10 Only) | Firmware | OS Independent | 8.20 to 8.10 (Latest) | 2/1/2021 |

The world of PCI is vast and full of (mostly unpleasant) surprises. Since each CPU architecture implements different chip-sets and PCI devices have different requirements (erm, “features”), the result is that PCI support in the Linux kernel is not as trivial as one would wish. This short paper tries to introduce all potential driver authors to Linux APIs for PCI device drivers.

A more complete resource is the third edition of “Linux Device Drivers” by Jonathan Corbet, Alessandro Rubini, and Greg Kroah-Hartman. LDD3 is available for free (under Creative Commons License) from: https://lwn.net/Kernel/LDD3/.

However, keep in mind that all documents are subject to “bit rot”. Refer to the source code if things are not working as described here.

Please send questions/comments/patches about Linux PCI API to the “Linux PCI” <linux-pci@atrey.karlin.mff.cuni.cz> mailing list.

1.1. Structure of PCI drivers

PCI drivers “discover” PCI devices in a system via pci_register_driver(). Actually, it’s the other way around. When the PCI generic code discovers a new device, the driver with a matching “description” will be notified. Details on this below.

pci_register_driver() leaves most of the probing for devices to the PCI layer and supports online insertion/removal of devices [thus supporting hot-pluggable PCI, CardBus, and Express-Card in a single driver]. The pci_register_driver() call requires passing in a table of function pointers and thus dictates the high level structure of a driver.

Once the driver knows about a PCI device and takes ownership, the driver generally needs to perform the following initialization:

- Enable the device

- Request MMIO/IOP resources

- Set the DMA mask size (for both coherent and streaming DMA)

- Allocate and initialize shared control data (dma_alloc_coherent())

- Access device configuration space (if needed)

- Register IRQ handler (request_irq())

- Initialize non-PCI (i.e. LAN/SCSI/etc parts of the chip)

- Enable DMA/processing engines

When done using the device, and perhaps the module needs to be unloaded, the driver needs to take the following steps:

- Disable the device from generating IRQs

- Release the IRQ (free_irq())

- Stop all DMA activity

- Release DMA buffers (both streaming and coherent)

- Unregister from other subsystems (e.g. scsi or netdev)

- Release MMIO/IOP resources

- Disable the device

Most of these topics are covered in the following sections. For the rest look at LDD3 or <linux/pci.h>.

If the PCI subsystem is not configured (CONFIG_PCI is not set), most of the PCI functions described below are defined as inline functions either completely empty or just returning an appropriate error code to avoid lots of ifdefs in the drivers.

1.2. pci_register_driver() call

PCI device drivers call pci_register_driver() during their initialization with a pointer to a structure describing the driver (struct pci_driver):

struct pci_driver: PCI driver structure

Members

- node: List of driver structures.

- name: Driver name.

- id_table: Pointer to table of device IDs the driver is interested in. Most drivers should export this table using MODULE_DEVICE_TABLE(pci,…).

- probe: This probing function gets called (during execution of pci_register_driver() for already existing devices or later if a new device gets inserted) for all PCI devices which match the ID table and are not “owned” by the other drivers yet. This function gets passed a “struct pci_dev *” for each device whose entry in the ID table matches the device. The probe function returns zero when the driver chooses to take “ownership” of the device or an error code (negative number) otherwise. The probe function always gets called from process context, so it can sleep.

- remove: The remove() function gets called whenever a device being handled by this driver is removed (either during deregistration of the driver or when it’s manually pulled out of a hot-pluggable slot). The remove function always gets called from process context, so it can sleep.

- suspend: Put device into low power state.

- resume: Wake device from low power state. (Please see PCI Power Management for descriptions of PCI Power Management and the related functions.)

- shutdown: Hook into reboot_notifier_list (kernel/sys.c). Intended to stop any idling DMA operations. Useful for enabling wake-on-lan (NIC) or changing the power state of a device before reboot, e.g. drivers/net/e100.c.

- sriov_configure: Optional driver callback to allow configuration of number of VFs to enable via sysfs “sriov_numvfs” file.

- err_handler: See PCI Error Recovery.

- groups: Sysfs attribute groups.

- driver: Driver model structure.

- dynids: List of dynamically added device IDs.

The ID table is an array of struct pci_device_id entries ending with an all-zero entry. Definitions with static const are generally preferred.

struct pci_device_id: PCI device ID structure

Members

- vendor: Vendor ID to match (or PCI_ANY_ID)

- device: Device ID to match (or PCI_ANY_ID)

- subvendor: Subsystem vendor ID to match (or PCI_ANY_ID)

- subdevice: Subsystem device ID to match (or PCI_ANY_ID)

- class: Device class, subclass, and “interface” to match. See Appendix D of the PCI Local Bus Spec or include/linux/pci_ids.h for a full list of classes. Most drivers do not need to specify class/class_mask as vendor/device is normally sufficient.

- class_mask: Limit which sub-fields of the class field are compared. See drivers/scsi/sym53c8xx_2/ for example of usage.

- driver_data: Data private to the driver. Most drivers don’t need to use driver_data field. Best practice is to use driver_data as an index into a static list of equivalent device types, instead of using it as a pointer.

Most drivers only need PCI_DEVICE() or PCI_DEVICE_CLASS() to set up a pci_device_id table.
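To make the matching rules concrete, here is a minimal userspace sketch of how an ID table entry can be compared against a device. It is a model, not kernel code: `struct toy_pci_dev`, `enum board_type`, `id_matches()`, `match_id_table()` and the vendor/device numbers are all hypothetical, and `struct pci_device_id` is redeclared locally with the field names listed above. The comparison follows the description of PCI_ANY_ID and class_mask given in the member list.

```c
#include <stddef.h>
#include <stdint.h>

#define PCI_ANY_ID 0xffffffffu  /* wildcard value, as used by the kernel */

/* Userspace stand-in for struct pci_device_id (same field names). */
struct pci_device_id {
    uint32_t vendor, device;
    uint32_t subvendor, subdevice;
    uint32_t class, class_mask;
    unsigned long driver_data;
};

/* Hypothetical stand-in for the identification fields of struct pci_dev. */
struct toy_pci_dev {
    uint32_t vendor, device;
    uint32_t subsystem_vendor, subsystem_device;
    uint32_t class;  /* base class, subclass and interface, one byte each */
};

/* driver_data is used as an index into a static list of board types. */
enum board_type { BOARD_BASIC, BOARD_FANCY };

/* Made-up IDs, for illustration only. */
const struct pci_device_id example_ids[] = {
    { 0x1234, 0x0001, PCI_ANY_ID, PCI_ANY_ID, 0, 0, BOARD_BASIC },
    { 0x1234, PCI_ANY_ID, PCI_ANY_ID, PCI_ANY_ID, 0x020000, 0xffff00, BOARD_FANCY },
    { 0, 0, 0, 0, 0, 0, 0 }  /* all-zero terminator */
};

/* Non-zero when every field matches or is a wildcard; class is compared
 * only in the bits selected by class_mask. */
int id_matches(const struct pci_device_id *id, const struct toy_pci_dev *dev)
{
    return (id->vendor == PCI_ANY_ID || id->vendor == dev->vendor) &&
           (id->device == PCI_ANY_ID || id->device == dev->device) &&
           (id->subvendor == PCI_ANY_ID || id->subvendor == dev->subsystem_vendor) &&
           (id->subdevice == PCI_ANY_ID || id->subdevice == dev->subsystem_device) &&
           !((id->class ^ dev->class) & id->class_mask);
}

/* Walk the table until the all-zero entry; return the first match or NULL. */
const struct pci_device_id *match_id_table(const struct pci_device_id *ids,
                                           const struct toy_pci_dev *dev)
{
    for (; ids->vendor || ids->subvendor || ids->class_mask; ids++)
        if (id_matches(ids, dev))
            return ids;
    return NULL;
}
```

The second entry shows why class_mask exists: with mask 0xffff00 it matches any interface byte of class 0x0200xx, while the first entry, with a zero mask, ignores the class entirely.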

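New PCI IDs may be added to a device driver’s pci_ids table at runtime by writing a line of the form “vendor device subvendor subdevice class class_mask driver_data” to the driver’s new_id file in sysfs (/sys/bus/pci/drivers/{driver}/new_id).

All fields are passed in as hexadecimal values (no leading 0x). The vendor and device fields are mandatory, the others are optional. Users need pass only as many optional fields as necessary: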

- subvendor and subdevice fields default to PCI_ANY_ID (FFFFFFFF)

- class and class_mask fields default to 0

- driver_data defaults to 0UL.

Note that driver_data must match the value used by any of the pci_device_id entries defined in the driver. This makes the driver_data field mandatory if all the pci_device_id entries have a non-zero driver_data value.

Once added, the driver probe routine will be invoked for any unclaimed PCI devices listed in its (newly updated) pci_ids list.
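The field rules above (two mandatory hexadecimal fields, the rest optional with defaults) can be sketched as a small userspace parser. This is an illustrative model only: `struct new_id_request` and `parse_new_id()` are hypothetical names, and the return value of -1 merely stands in for whatever error the kernel would report.

```c
#include <stdio.h>
#include <stdint.h>

#define PCI_ANY_ID 0xffffffffu

/* Hypothetical container for one parsed new_id line. */
struct new_id_request {
    uint32_t vendor, device;
    uint32_t subvendor, subdevice;
    uint32_t class, class_mask;
    unsigned long driver_data;
};

/* Parse "vendor device [subvendor subdevice class class_mask driver_data]".
 * Returns 0 on success, -1 when the mandatory vendor/device pair is missing. */
int parse_new_id(const char *buf, struct new_id_request *req)
{
    unsigned int vendor = 0, device = 0;
    unsigned int subvendor = PCI_ANY_ID, subdevice = PCI_ANY_ID;  /* defaults */
    unsigned int class = 0, class_mask = 0;                       /* defaults */
    unsigned long driver_data = 0;                                /* default  */
    int fields;

    /* Every field is hexadecimal; sscanf stops at the first missing one. */
    fields = sscanf(buf, "%x %x %x %x %x %x %lx",
                    &vendor, &device, &subvendor, &subdevice,
                    &class, &class_mask, &driver_data);
    if (fields < 2)
        return -1;

    req->vendor = vendor;
    req->device = device;
    req->subvendor = subvendor;
    req->subdevice = subdevice;
    req->class = class;
    req->class_mask = class_mask;
    req->driver_data = driver_data;
    return 0;
}
```

For example, parsing "1234 abcd" leaves subvendor and subdevice at PCI_ANY_ID and the remaining fields at zero, while a line holding only "1234" is rejected.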

When the driver exits, it just calls pci_unregister_driver() and the PCI layer automatically calls the remove hook for all devices handled by the driver.
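The ownership rules for probe() and remove() can be illustrated with a toy bus in plain userspace C. Everything here is hypothetical (`toy_dev`, `toy_driver`, `toy_register_driver()` and so on); the real pci_register_driver() returns an error code rather than a count, and a real driver carries a full id_table instead of a single ID. The sketch only shows the flow: unclaimed matching devices are offered to probe(), a zero return takes ownership, and unregistering calls remove() for each owned device.

```c
#include <stddef.h>

#define MAX_DEVS 4

struct toy_driver;

struct toy_dev {
    unsigned int vendor, device;
    int broken;                      /* hypothetical flag that makes probe() decline */
    const struct toy_driver *owner;  /* NULL while no driver owns the device */
};

struct toy_driver {
    const char *name;
    unsigned int vendor, device;     /* one ID instead of an id_table, for brevity */
    int  (*probe)(struct toy_dev *dev);
    void (*remove)(struct toy_dev *dev);
};

struct toy_dev toy_bus[MAX_DEVS];
int toy_bus_count;
int remove_calls;

/* Offer every unclaimed, matching device to probe(); count those it accepts. */
int toy_register_driver(const struct toy_driver *drv)
{
    int claimed = 0;

    for (int i = 0; i < toy_bus_count; i++) {
        struct toy_dev *dev = &toy_bus[i];

        if (dev->owner || dev->vendor != drv->vendor || dev->device != drv->device)
            continue;
        if (drv->probe(dev) == 0) {  /* zero means the driver takes ownership */
            dev->owner = drv;
            claimed++;
        }
    }
    return claimed;
}

/* Call remove() for every device the driver still owns. */
void toy_unregister_driver(const struct toy_driver *drv)
{
    for (int i = 0; i < toy_bus_count; i++) {
        if (toy_bus[i].owner == drv) {
            drv->remove(&toy_bus[i]);
            toy_bus[i].owner = NULL;
        }
    }
}

int example_probe(struct toy_dev *dev)
{
    return dev->broken ? -1 : 0;     /* negative value stands in for an errno */
}

void example_remove(struct toy_dev *dev)
{
    (void)dev;
    remove_calls++;
}

const struct toy_driver example_driver = {
    "example", 0x1234, 0x0001, example_probe, example_remove
};
```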

1.2.1. “Attributes” for driver functions/data

Please mark the initialization and cleanup functions where appropriate (the corresponding macros are defined in <linux/init.h>):

| Macro | Meaning |
|---|---|
| __init | Initialization code. Thrown away after the driver initializes. |
| __exit | Exit code. Ignored for non-modular drivers. |

- The module_init()/module_exit() functions (and all initialization functions called _only_ from these) should be marked __init/__exit.

- Do not mark the struct pci_driver.

- Do NOT mark a function if you are not sure which mark to use. Better to not mark the function than mark the function wrong.

1.3. How to find PCI devices manually

PCI drivers should have a really good reason for not using the pci_register_driver() interface to search for PCI devices. The main reason PCI devices are controlled by multiple drivers is because one PCI device implements several different HW services. E.g. combined serial/parallel port/floppy controller.

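A manual search may be performed using the following constructs:

- Searching by vendor and device ID: call pci_get_device(VENDOR_ID, DEVICE_ID, dev) in a loop, starting with dev set to NULL and passing the previously returned device on each call, until it returns NULL.

- Searching by class ID (iterate in a similar way): pci_get_class(CLASS_ID, dev).

- Searching by both vendor/device and subsystem vendor/device ID: pci_get_subsys(VENDOR_ID, DEVICE_ID, SUBSYS_VENDOR_ID, SUBSYS_DEVICE_ID, dev).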

You can use the constant PCI_ANY_ID as a wildcard replacement for VENDOR_ID or DEVICE_ID. This allows searching for any device from a specific vendor, for example.

These functions are hotplug-safe. They increment the reference count on the pci_dev that they return. You must eventually (possibly at module unload) decrement the reference count on these devices by calling pci_dev_put().
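The iteration idiom and its reference counting can be modelled in userspace as follows. This is a sketch under assumptions: `toy_bus`, `toy_get_device()`, `toy_dev_put()` and `count_vendor()` are hypothetical, and the model assumes (as pci_get_device() is documented to do) that the reference on the device passed in as the starting point is dropped while a new one is taken on the device returned.

```c
#include <stddef.h>

#define PCI_ANY_ID 0xffffffffu

struct toy_dev {
    unsigned int vendor, device;
    int refcount;
};

/* Made-up devices; each starts with one reference held by the "bus". */
struct toy_dev toy_bus[] = {
    { 0x1234, 0x0001, 1 },
    { 0x5678, 0x0002, 1 },
    { 0x1234, 0x0003, 1 },
};
#define TOY_BUS_LEN (sizeof(toy_bus) / sizeof(toy_bus[0]))

/* Model of pci_dev_put(): drop one reference (NULL is allowed). */
void toy_dev_put(struct toy_dev *dev)
{
    if (dev)
        dev->refcount--;
}

/* Model of pci_get_device(): continue after `from`, take a reference on the
 * match, and drop the reference previously taken on `from`. */
struct toy_dev *toy_get_device(unsigned int vendor, unsigned int device,
                               struct toy_dev *from)
{
    size_t start = from ? (size_t)(from - toy_bus) + 1 : 0;
    struct toy_dev *found = NULL;

    for (size_t i = start; i < TOY_BUS_LEN; i++) {
        if ((vendor == PCI_ANY_ID || toy_bus[i].vendor == vendor) &&
            (device == PCI_ANY_ID || toy_bus[i].device == device)) {
            found = &toy_bus[i];
            found->refcount++;
            break;
        }
    }
    toy_dev_put(from);
    return found;
}

/* Typical loop: runs to completion, so no reference is left held. */
int count_vendor(unsigned int vendor)
{
    struct toy_dev *dev = NULL;
    int n = 0;

    while ((dev = toy_get_device(vendor, PCI_ANY_ID, dev)) != NULL)
        n++;
    return n;
}
```

A loop that runs until NULL leaves the counts balanced. A caller that breaks out early, or keeps the returned pointer, still holds a reference and must release it itself.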

1.4. Device Initialization Steps

As noted in the introduction, most PCI drivers need the following steps for device initialization:

- Enable the device

- Request MMIO/IOP resources

- Set the DMA mask size (for both coherent and streaming DMA)

- Allocate and initialize shared control data (dma_alloc_coherent())

- Access device configuration space (if needed)

- Register IRQ handler (request_irq())

- Initialize non-PCI (i.e. LAN/SCSI/etc parts of the chip)

- Enable DMA/processing engines.
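The control flow of a probe routine that follows these steps, and unwinds when one of them fails, might look like the userspace sketch below. It is only a model: each step is a stub that appends a letter to a trace string, `fail_at` is a hypothetical knob for injecting a failure, and the kernel calls named in the comments are not actually invoked. The unwind path disables the device before releasing its regions, following the ordering this document recommends for region release.

```c
#include <string.h>

/* Hypothetical step identifiers; comments name the kernel call each models. */
enum step { ENABLE, REGIONS, DMA_MASK, CTRL_DATA, IRQ };

char trace[32];    /* upper case = step done, lower case = step undone */
int fail_at = -1;  /* step that should fail, or -1 for none */

void log_tag(char tag)
{
    size_t n = strlen(trace);

    if (n + 1 < sizeof(trace)) {
        trace[n] = tag;
        trace[n + 1] = '\0';
    }
}

int setup(enum step s, char tag)
{
    if ((int)s == fail_at)
        return -1;                 /* stands in for a negative errno */
    log_tag(tag);
    return 0;
}

int toy_probe(void)
{
    int err;

    trace[0] = '\0';
    err = setup(ENABLE, 'E');      /* pci_enable_device() */
    if (err)
        return err;
    err = setup(REGIONS, 'R');     /* request MMIO/IOP resources */
    if (err)
        goto err_disable;
    err = setup(DMA_MASK, 'M');    /* set the DMA masks */
    if (err)
        goto err_disable_release;
    err = setup(CTRL_DATA, 'C');   /* allocate shared control data */
    if (err)
        goto err_disable_release;
    err = setup(IRQ, 'I');         /* request_irq() */
    if (err)
        goto err_free_ctrl;
    log_tag('D');                  /* enable DMA/processing engines last */
    return 0;

err_free_ctrl:
    log_tag('c');                  /* free shared control data */
err_disable_release:
    log_tag('e');                  /* disable the device ... */
    log_tag('r');                  /* ... then release its regions */
    return err;
err_disable:
    log_tag('e');
    return err;
}
```

With no injected failure the trace reads "ERMCID". Failing the IRQ step yields "ERMCcer": the control data is freed, the device disabled, and the regions released.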

The driver can access PCI config space registers at any time. (Well, almost. When running BIST, config space can go away… but that will just result in a PCI Bus Master Abort and config reads will return garbage).

1.4.1. Enable the PCI device

Before touching any device registers, the driver needs to enable the PCI device by calling pci_enable_device(). This will:

- wake up the device if it was in suspended state,

- allocate I/O and memory regions of the device (if BIOS did not),

- allocate an IRQ (if BIOS did not).

Note

pci_enable_device() can fail! Check the return value.

Warning

OS BUG: we don’t check resource allocations before enabling those resources. The sequence would make more sense if we called pci_request_resources() before calling pci_enable_device(). Currently, the device drivers can’t detect the bug when two devices have been allocated the same range. This is not a common problem and unlikely to get fixed soon.

This has been discussed before but not changed as of 2.6.19: https://lore.kernel.org/r/20060302180025.GC28895@flint.arm.linux.org.uk/

pci_set_master() will enable DMA by setting the bus master bit in the PCI_COMMAND register. It also fixes the latency timer value if it’s set to something bogus by the BIOS. pci_clear_master() will disable DMA by clearing the bus master bit.

If the PCI device can use the PCI Memory-Write-Invalidate transaction, call pci_set_mwi(). This enables the PCI_COMMAND bit for Mem-Wr-Inval and also ensures that the cache line size register is set correctly. Check the return value of pci_set_mwi() as not all architectures or chip-sets may support Memory-Write-Invalidate. Alternatively, if Mem-Wr-Inval would be nice to have but is not required, call pci_try_set_mwi() to have the system do its best effort at enabling Mem-Wr-Inval.

1.4.2. Request MMIO/IOP resources

Memory (MMIO), and I/O port addresses should NOT be read directly from the PCI device config space. Use the values in the pci_dev structure as the PCI “bus address” might have been remapped to a “host physical” address by the arch/chip-set specific kernel support.

See The io_mapping functions for how to access device registers or device memory.

The device driver needs to call pci_request_region() to verify no other device is already using the same address resource. Conversely, drivers should call pci_release_region() AFTER calling pci_disable_device(). The idea is to prevent two devices colliding on the same address range.
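The collision check behind a region request can be pictured with a small userspace model. The names `toy_request_region()` and `toy_release_region()` and the fixed-size claim table are hypothetical; the kernel keeps its own resource tree and reports a conflict with a real error code, for which -1 stands in here.

```c
#include <stddef.h>

#define MAX_CLAIMS 8

struct toy_claim {
    unsigned long start, end;  /* inclusive address range */
    const char *name;          /* who claimed it */
    int used;
};

struct toy_claim claims[MAX_CLAIMS];

/* Claim [start, start + len - 1]. Returns 0 on success, -1 when the range
 * overlaps an existing claim or no slot is free. */
int toy_request_region(unsigned long start, unsigned long len, const char *name)
{
    unsigned long end;
    struct toy_claim *slot = NULL;

    if (len == 0)
        return -1;
    end = start + len - 1;

    for (int i = 0; i < MAX_CLAIMS; i++) {
        if (!claims[i].used) {
            if (!slot)
                slot = &claims[i];
            continue;
        }
        /* Two inclusive ranges overlap when each starts before the other ends. */
        if (start <= claims[i].end && claims[i].start <= end)
            return -1;
    }
    if (!slot)
        return -1;

    slot->start = start;
    slot->end = end;
    slot->name = name;
    slot->used = 1;
    return 0;
}

/* Mark a previously claimed range as available again. */
void toy_release_region(unsigned long start, unsigned long len)
{
    unsigned long end = start + len - 1;

    for (int i = 0; i < MAX_CLAIMS; i++)
        if (claims[i].used && claims[i].start == start && claims[i].end == end)
            claims[i].used = 0;
}
```

A second driver asking for a range that overlaps an existing claim is refused until the first claim is released, which is also why forgetting the release tends to block reloading a driver.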

Tip

See OS BUG comment above. Currently (2.6.19), the driver can only determine MMIO and IO Port resource availability _after_ calling pci_enable_device().

Generic flavors of pci_request_region() are request_mem_region() (for MMIO ranges) and request_region() (for IO Port ranges). Use these for address resources that are not described by “normal” PCI BARs.

Also see pci_request_selected_regions() below.

1.4.3. Set the DMA mask size

Note

If anything below doesn’t make sense, please refer to Dynamic DMA mapping using the generic device. This section is just a reminder that drivers need to indicate DMA capabilities of the device and is not an authoritative source for DMA interfaces.

While all drivers should explicitly indicate the DMA capability (e.g. 32 or 64 bit) of the PCI bus master, devices with more than 32-bit bus master capability for streaming data need the driver to “register” this capability by calling pci_set_dma_mask() with appropriate parameters. In general this allows more efficient DMA on systems where System RAM exists above 4G _physical_ address.

Drivers for all PCI-X and PCIe compliant devices must call pci_set_dma_mask() as they are 64-bit DMA devices.

Similarly, drivers must also “register” this capability if the device can directly address “consistent memory” in System RAM above 4G physical address by calling pci_set_consistent_dma_mask(). Again, this includes drivers for all PCI-X and PCIe compliant devices. Many 64-bit “PCI” devices (before PCI-X) and some PCI-X devices are 64-bit DMA capable for payload (“streaming”) data but not control (“consistent”) data.
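What a DMA mask expresses, and the common "try 64-bit, fall back to 32-bit" pattern, can be sketched in userspace. DMA_BIT_MASK() below is written the way the kernel macro of that name is defined; everything else (`struct toy_dev`, `toy_set_dma_mask()`, `toy_pick_dma_mask()`, `toy_dma_addr_ok()`) is hypothetical, and the acceptance rule in `toy_set_dma_mask()` is an assumption made for the sake of the example, not the kernel's actual policy.

```c
#include <stdint.h>

/* Mask with the low n bits set; n == 64 is special-cased to avoid an
 * undefined 64-bit shift. */
#define DMA_BIT_MASK(n) (((n) == 64) ? ~0ULL : ((1ULL << (n)) - 1))

struct toy_dev {
    int      max_addr_bits;  /* assumption: widest addressing that will be accepted */
    uint64_t dma_mask;       /* mask currently in effect */
};

/* Toy stand-in for a set-mask call: 0 on success, -1 if the width is refused. */
int toy_set_dma_mask(struct toy_dev *dev, int bits)
{
    if (bits > dev->max_addr_bits)
        return -1;
    dev->dma_mask = DMA_BIT_MASK(bits);
    return 0;
}

/* Prefer 64-bit addressing, fall back to 32-bit, otherwise give up. */
int toy_pick_dma_mask(struct toy_dev *dev)
{
    if (toy_set_dma_mask(dev, 64) == 0)
        return 64;
    if (toy_set_dma_mask(dev, 32) == 0)
        return 32;
    return -1;
}

/* An address is reachable only if it has no bits set outside the mask. */
int toy_dma_addr_ok(const struct toy_dev *dev, uint64_t addr)
{
    return (addr & ~dev->dma_mask) == 0;
}
```

With a 32-bit mask, a buffer at the 4G boundary (0x100000000) is out of reach, which is the case the text describes for RAM above 4G physical address.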

1.4.4. Setup shared control data

Once the DMA masks are set, the driver can allocate “consistent” (a.k.a. shared) memory. See Dynamic DMA mapping using the generic device for a full description of the DMA APIs. This section is just a reminder that it needs to be done before enabling DMA on the device.

1.4.5. Initialize device registers

Some drivers will need specific “capability” fields programmed or other “vendor specific” register initialized or reset. E.g. clearing pending interrupts.

1.4.6. Register IRQ handler

While calling request_irq() is the last step described here, this is often just another intermediate step to initialize a device. This step can often be deferred until the device is opened for use.

All interrupt handlers for IRQ lines should be registered with IRQF_SHARED and use the devid to map IRQs to devices (remember that all PCI IRQ lines can be shared).

request_irq() will associate an interrupt handler and device handle with an interrupt number. Historically interrupt numbers represent IRQ lines which run from the PCI device to the Interrupt controller. With MSI and MSI-X (more below) the interrupt number is a CPU “vector”.

request_irq() also enables the interrupt. Make sure the device is quiesced and does not have any interrupts pending before registering the interrupt handler.

MSI and MSI-X are PCI capabilities. Both are “Message Signaled Interrupts” which deliver interrupts to the CPU via a DMA write to a Local APIC. The fundamental difference between MSI and MSI-X is how multiple “vectors” get allocated. MSI requires contiguous blocks of vectors while MSI-X can allocate several individual ones.

MSI capability can be enabled by calling pci_alloc_irq_vectors() with the PCI_IRQ_MSI and/or PCI_IRQ_MSIX flags before calling request_irq(). This causes the PCI support to program CPU vector data into the PCI device capability registers. Many architectures, chip-sets, or BIOSes do NOT support MSI or MSI-X and a call to pci_alloc_irq_vectors() with just the PCI_IRQ_MSI and PCI_IRQ_MSIX flags will fail, so try to always specify PCI_IRQ_LEGACY as well.

Drivers that have different interrupt handlers for MSI/MSI-X and legacy INTx should choose the right one based on the msi_enabled and msix_enabled flags in the pci_dev structure after calling pci_alloc_irq_vectors().

There are (at least) two really good reasons for using MSI:

- MSI is an exclusive interrupt vector by definition. This means the interrupt handler doesn’t have to verify its device caused the interrupt.

- MSI avoids DMA/IRQ race conditions. DMA to host memory is guaranteed to be visible to the host CPU(s) when the MSI is delivered. This is important for both data coherency and avoiding stale control data. This guarantee allows the driver to omit MMIO reads to flush the DMA stream.

See drivers/infiniband/hw/mthca/ or drivers/net/tg3.c for examples of MSI/MSI-X usage.

1.5. PCI device shutdown

When a PCI device driver is being unloaded, most of the following steps need to be performed:

- Disable the device from generating IRQs

- Release the IRQ (free_irq())

- Stop all DMA activity

- Release DMA buffers (both streaming and consistent)

- Unregister from other subsystems (e.g. scsi or netdev)

- Disable device from responding to MMIO/IO Port addresses

- Release MMIO/IO Port resource(s)

1.5.1. Stop IRQs on the device

How to do this is chip/device specific. If it’s not done, it opens the possibility of a “screaming interrupt” if (and only if) the IRQ is shared with another device.

When the shared IRQ handler is “unhooked”, the remaining devices using the same IRQ line will still need the IRQ enabled. Thus if the “unhooked” device asserts the IRQ line, the system will respond assuming it was one of the remaining devices that asserted the IRQ line. Since none of the other devices will handle the IRQ, the system will “hang” until it decides the IRQ isn’t going to get handled and masks the IRQ (100,000 iterations later). Once the shared IRQ is masked, the remaining devices will stop functioning properly. Not a nice situation.

This is another reason to use MSI or MSI-X if it’s available. MSI and MSI-X are defined to be exclusive interrupts and thus are not susceptible to the “screaming interrupt” problem.
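The failure mode described above can be reproduced with a toy model of a shared interrupt line. All names here are hypothetical, and the bookkeeping is deliberately simplified: the model masks the line after SPURIOUS_LIMIT consecutive deliveries that no handler claimed, using the iteration count quoted in the text, whereas the kernel's real accounting is more involved. Each handler uses its dev_id to decide whether its own device raised the interrupt, as a handler registered with IRQF_SHARED is expected to do.

```c
#include <stddef.h>

enum toy_irqreturn { IRQ_NONE = 0, IRQ_HANDLED = 1 };

#define MAX_ACTIONS    4
#define SPURIOUS_LIMIT 100000UL  /* iteration count mentioned in the text */

struct toy_dev {
    int irq_pending;  /* device is asserting the line */
    int serviced;     /* how many interrupts its handler has claimed */
};

struct toy_action {
    enum toy_irqreturn (*handler)(void *dev_id);
    void *dev_id;     /* identifies the device to its handler */
};

struct toy_irq_line {
    struct toy_action actions[MAX_ACTIONS];
    size_t nr_actions;
    unsigned long unhandled;  /* consecutive deliveries nobody claimed */
    int masked;
};

/* Shared handler: claim the interrupt only if our own device raised it. */
enum toy_irqreturn toy_isr(void *dev_id)
{
    struct toy_dev *dev = dev_id;

    if (!dev->irq_pending)
        return IRQ_NONE;
    dev->irq_pending = 0;
    dev->serviced++;
    return IRQ_HANDLED;
}

int toy_request_irq(struct toy_irq_line *line,
                    enum toy_irqreturn (*handler)(void *), void *dev_id)
{
    if (line->nr_actions == MAX_ACTIONS)
        return -1;
    line->actions[line->nr_actions].handler = handler;
    line->actions[line->nr_actions].dev_id = dev_id;
    line->nr_actions++;
    return 0;
}

void toy_free_irq(struct toy_irq_line *line, void *dev_id)
{
    for (size_t i = 0; i < line->nr_actions; i++) {
        if (line->actions[i].dev_id == dev_id) {
            line->actions[i] = line->actions[line->nr_actions - 1];
            line->nr_actions--;
            return;
        }
    }
}

/* One assertion of the shared line: ask every registered handler. */
void toy_deliver(struct toy_irq_line *line)
{
    int handled = 0;

    if (line->masked)
        return;
    for (size_t i = 0; i < line->nr_actions; i++)
        if (line->actions[i].handler(line->actions[i].dev_id) == IRQ_HANDLED)
            handled = 1;
    if (handled)
        line->unhandled = 0;
    else if (++line->unhandled >= SPURIOUS_LIMIT)
        line->masked = 1;     /* give up: everyone on the line loses */
}
```

If a driver unhooks its handler while its device keeps asserting the line, every delivery goes unclaimed, the line is eventually masked, and the other device sharing it stops being serviced.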

1.5.2. Release the IRQ

Once the device is quiesced (no more IRQs), one can call free_irq(). This function will return control once any pending IRQs are handled, “unhook” the driver’s IRQ handler from that IRQ, and finally release the IRQ if no one else is using it.

1.5.3. Stop all DMA activity

It’s extremely important to stop all DMA operations BEFORE attempting to deallocate DMA control data. Failure to do so can result in memory corruption, hangs, and on some chip-sets a hard crash.

Stopping DMA after stopping the IRQs can avoid races where the IRQ handler might restart DMA engines.

While this step sounds obvious and trivial, several “mature” drivers didn’t get this step right in the past.

1.5.4. Release DMA buffers

Once DMA is stopped, clean up streaming DMA first. I.e. unmap data buffers and return buffers to “upstream” owners if there is one.

Then clean up “consistent” buffers which contain the control data.

See Dynamic DMA mapping using the generic device for details on unmapping interfaces.

1.5.5. Unregister from other subsystems

Most low level PCI device drivers support some other subsystem like USB, ALSA, SCSI, NetDev, Infiniband, etc. Make sure your driver isn’t losing resources from that other subsystem. If this happens, typically the symptom is an Oops (panic) when the subsystem attempts to call into a driver that has been unloaded.

1.5.6. Disable Device from responding to MMIO/IO Port addresses

Unmap MMIO or IO Port resources (e.g. with iounmap()) and then call pci_disable_device(). This is the symmetric opposite of pci_enable_device(). Do not access device registers after calling pci_disable_device().

1.5.7. Release MMIO/IO Port Resource(s)

Call pci_release_region() to mark the MMIO or IO Port range as available. Failure to do so usually results in the inability to reload the driver.

1.6. How to access PCI config space

You can use pci_(read|write)_config_(byte|word|dword) to access the config space of a device represented by struct pci_dev *. All these functions return 0 when successful or an error code (PCIBIOS_…) which can be translated to a text string by pcibios_strerror. Most drivers expect that accesses to valid PCI devices don’t fail.

If you don’t have a struct pci_dev available, you can call pci_bus_(read|write)_config_(byte|word|dword) to access a given device and function on that bus.

If you access fields in the standard portion of the config header, please use symbolic names of locations and bits declared in <linux/pci.h>.

If you need to access Extended PCI Capability registers, just call pci_find_capability() for the particular capability and it will find the corresponding register block for you.
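To show what finding a capability involves, the sketch below walks a capability list in a fake 256-byte config space held in memory. It is a userspace model only: `struct toy_cfg`, `cfg_read_byte()`, `cfg_read_word()` and `toy_find_capability()` are hypothetical, and the loop bound is an arbitrary guard against malformed lists. The register offsets and capability IDs are the standard ones from the PCI configuration header; in a driver the symbolic names would come from the kernel headers rather than being redefined.

```c
#include <stdint.h>

/* Standard config header offsets and bits. */
#define PCI_STATUS           0x06
#define PCI_STATUS_CAP_LIST  0x10  /* device has a capability list */
#define PCI_CAPABILITY_LIST  0x34  /* offset of the first list entry */
#define PCI_CAP_LIST_ID      0     /* within an entry: capability ID */
#define PCI_CAP_LIST_NEXT    1     /* within an entry: next pointer */

#define PCI_CAP_ID_PM        0x01  /* Power Management */
#define PCI_CAP_ID_MSI       0x05  /* Message Signalled Interrupts */
#define PCI_CAP_ID_MSIX      0x11  /* MSI-X */

/* Hypothetical in-memory copy of one function's config space. */
struct toy_cfg {
    uint8_t bytes[256];
};

uint8_t cfg_read_byte(const struct toy_cfg *cfg, int where)
{
    return cfg->bytes[where];
}

/* Config space is little-endian. */
uint16_t cfg_read_word(const struct toy_cfg *cfg, int where)
{
    return (uint16_t)(cfg->bytes[where] | (cfg->bytes[where + 1] << 8));
}

/* Return the config space offset of capability `cap`, or 0 if absent. */
int toy_find_capability(const struct toy_cfg *cfg, int cap)
{
    int ttl = 48;  /* bound the walk in case the list loops */
    uint8_t pos;

    if (!(cfg_read_word(cfg, PCI_STATUS) & PCI_STATUS_CAP_LIST))
        return 0;

    pos = cfg_read_byte(cfg, PCI_CAPABILITY_LIST);
    while (ttl-- && pos >= 0x40) {  /* entries live above the 64-byte header */
        uint8_t id;

        pos &= 0xfc;                /* entries are dword aligned */
        id = cfg_read_byte(cfg, pos + PCI_CAP_LIST_ID);
        if (id == 0xff)
            break;
        if (id == cap)
            return pos;
        pos = cfg_read_byte(cfg, pos + PCI_CAP_LIST_NEXT);
    }
    return 0;
}
```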

1.7. Other interesting functions

| Function | Description |
|---|---|
| pci_get_domain_bus_and_slot() | Find pci_dev corresponding to given domain, bus, and slot number. If the device is found, its reference count is increased. |
| pci_set_power_state() | Set PCI Power Management state (0=D0 … 3=D3) |
| pci_find_capability() | Find specified capability in device’s capability list. |
| pci_resource_start() | Returns bus start address for a given PCI region |
| pci_resource_end() | Returns bus end address for a given PCI region |
| pci_resource_len() | Returns the byte length of a PCI region |
| pci_set_drvdata() | Set private driver data pointer for a pci_dev |
| pci_get_drvdata() | Return private driver data pointer for a pci_dev |
| pci_set_mwi() | Enable Memory-Write-Invalidate transactions. |
| pci_clear_mwi() | Disable Memory-Write-Invalidate transactions. |

1.8. Miscellaneous hints

When displaying PCI device names to the user (for example when a driver wants to tell the user what card it has found), please use pci_name(pci_dev).

Always refer to the PCI devices by a pointer to the pci_dev structure. All PCI layer functions use this identification and it’s the only reasonable one. Don’t use bus/slot/function numbers except for very special purposes – on systems with multiple primary buses their semantics can be pretty complex.

Don’t try to turn on Fast Back to Back writes in your driver. All devices on the bus need to be capable of doing it, so this is something which needs to be handled by platform and generic code, not individual drivers.

1.9. Vendor and device identifications

Do not add new device or vendor IDs to include/linux/pci_ids.h unless they are shared across multiple drivers. You can add private definitions in your driver if they’re helpful, or just use plain hex constants.

The device IDs are arbitrary hex numbers (vendor controlled) and normally used only in a single location, the pci_device_id table.

Please DO submit new vendor/device IDs to https://pci-ids.ucw.cz/. There’s a mirror of the pci.ids file at https://github.com/pciutils/pciids.

1.10. Obsolete functions

There are several functions which you might come across when trying to port an old driver to the new PCI interface. They are no longer present in the kernel as they aren’t compatible with hotplug or PCI domains or having sane locking.

| Obsolete function | Replacement |
|---|---|
| pci_find_device() | Superseded by pci_get_device() |
| pci_find_subsys() | Superseded by pci_get_subsys() |
| pci_find_slot() | Superseded by pci_get_domain_bus_and_slot() |
| pci_get_slot() | Superseded by pci_get_domain_bus_and_slot() |

The alternative is the traditional PCI device driver that walks PCI device lists. This is still possible but discouraged.

1.11. MMIO Space and “Write Posting”

Converting a driver from using I/O Port space to using MMIO space often requires some additional changes. Specifically, “write posting” needs to be handled. Many drivers (e.g. tg3, acenic, sym53c8xx_2) already do this. I/O Port space guarantees write transactions reach the PCI device before the CPU can continue. Writes to MMIO space allow the CPU to continue before the transaction reaches the PCI device. HW weenies call this “Write Posting” because the write completion is “posted” to the CPU before the transaction has reached its destination.

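Thus, timing sensitive code should add readl() where the CPU is expected to wait before doing other work. The classic “bit banging” sequence, a loop that writes one bit with outb() and then waits with udelay(), works fine for I/O Port space.

The same sequence for MMIO space should follow each writeb() with a read from a safe register (“safe_mmio_reg”) to flush the posted write before the delay starts.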

It is important that “safe_mmio_reg” not have any side effects that interfere with the correct operation of the device.
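The effect of write posting on a bit-banging loop can be demonstrated with a small userspace simulation. Nothing here touches real hardware: `struct toy_device` and the `toy_*` functions are hypothetical, the posting queue is an invented stand-in for whatever buffering sits between CPU and device, and the delay between bits is only indicated by a comment. The model assumes, as the text describes, that a read from the device forces earlier posted writes to complete.

```c
#include <stdint.h>

#define POST_QUEUE_LEN 16

struct toy_device {
    uint8_t data_reg;                /* last value the device really received */
    int     writes_seen;             /* writes that have reached the device */
    uint8_t posted[POST_QUEUE_LEN];  /* writes still in flight */
    int     posted_count;
};

/* Deliver every posted write to the device, in order. */
void toy_flush(struct toy_device *dev)
{
    for (int i = 0; i < dev->posted_count; i++) {
        dev->data_reg = dev->posted[i];
        dev->writes_seen++;
    }
    dev->posted_count = 0;
}

/* I/O port write model: the device has the value before the call returns. */
void toy_outb(struct toy_device *dev, uint8_t val)
{
    dev->data_reg = val;
    dev->writes_seen++;
}

/* MMIO write model: the CPU continues while the write is still posted. */
void toy_writeb(struct toy_device *dev, uint8_t val)
{
    if (dev->posted_count == POST_QUEUE_LEN)
        toy_flush(dev);
    dev->posted[dev->posted_count++] = val;
}

/* MMIO read model: cannot complete until earlier writes have landed. */
uint8_t toy_readb(struct toy_device *dev)
{
    toy_flush(dev);
    return dev->data_reg;
}

/* Send 8 bits, LSB first. Returns how many bits had NOT reached the device
 * at the moment the per-bit delay would have started. */
int toy_bitbang_mmio(struct toy_device *dev, uint8_t val, int flush)
{
    int still_posted = 0;

    for (int i = 0; i < 8; i++, val >>= 1) {
        toy_writeb(dev, val & 1);    /* write bit */
        if (flush)
            (void)toy_readb(dev);    /* flush posted write */
        if (dev->posted_count > 0)
            still_posted++;
        /* a driver would call udelay() here */
    }
    return still_posted;
}
```

Without the flushing read, all eight bits are still in flight when their delays begin, so the device never sees the intended timing. With the read, each bit has landed before its delay starts.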

Another case to watch out for is when resetting a PCI device. Use PCI Configuration space reads to flush the writel(). This will gracefully handle the PCI master abort on all platforms if the PCI device is expected to not respond to a readl(). Most x86 platforms will allow MMIO reads to master abort (a.k.a. “Soft Fail”) and return garbage (e.g. ~0). But many RISC platforms will crash (a.k.a. “Hard Fail”).